Your CNI Replaced kube-proxy, Learned L7, and You Didn't Notice

eBPF datapath, identity-based policies, Hubble observability, egress gateways, and Cluster Mesh

In Issue #2, we talked about the mesh layer - how Istio Ambient killed the sidecar tax and moved L4/L7 processing out of the pod and into shared per-node and waypoint proxies. Today we go one level deeper. Into the CNI itself. The thing that actually moves packets between your pods.

This week: how Cilium replaced kube-proxy without anyone noticing, why iptables rules don’t scale, an observability layer that sees every single flow, and network policies that don’t care about IP addresses.

The Pattern: Cilium Replaces kube-proxy

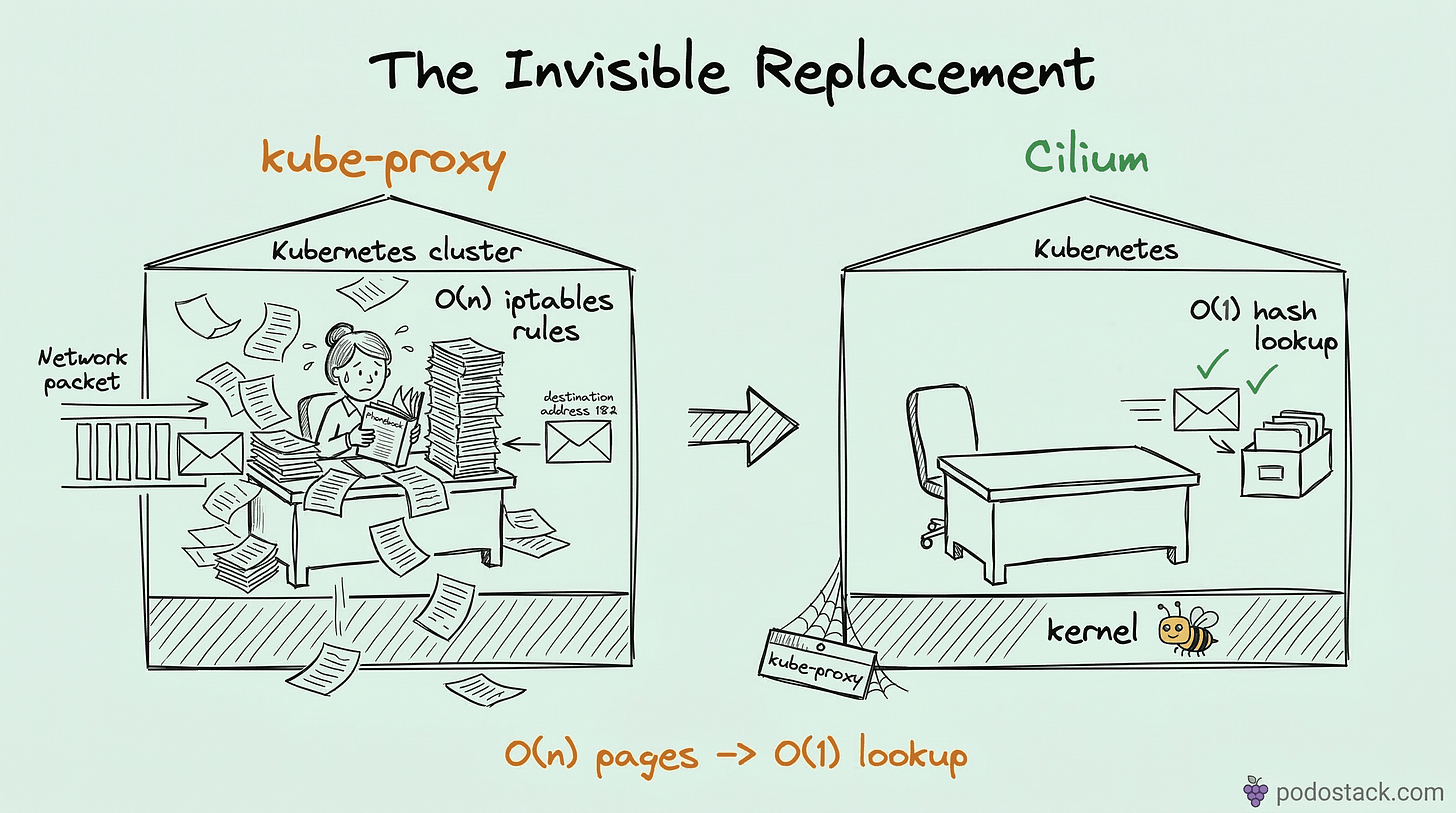

eBPF maps vs iptables chains. It’s not even close.

Here’s how kube-proxy works in its default iptables mode. Every time a Service or its endpoints change, kube-proxy rewrites iptables rules. At least one rule per endpoint. A cluster with 5,000 Services and 10 pods each? That’s 50,000 endpoints and at least as many iptables rules. The first packet of every connection walks the Service chain from top to bottom - O(n) linear traversal. Add a new Service, and kube-proxy has traditionally reloaded the whole ruleset. On large clusters, this takes seconds. During that rewrite window, connections can drop or land on stale endpoints.

Cilium takes a different approach. It compiles eBPF programs and loads them directly into the kernel. Service-to-pod mappings go into eBPF hash maps - O(1) lookup. When a packet hits the network interface, the eBPF program intercepts it before iptables even sees it. One hash lookup, destination rewritten, packet forwarded. Done.
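To make the difference concrete, here is a toy Python model. It is not kube-proxy or Cilium code: the function names and addresses are made up for illustration, and the only point is how much work one lookup costs as the number of Services grows.

```python
# Toy model: a linear rule chain versus a hash map keyed by (ClusterIP, port).
# All names and addresses are illustrative, not real kube-proxy or Cilium code.

def build_rule_chain(num_services):
    """Return a list of ((cluster_ip, port), backend) rules, one per Service."""
    return [((f"10.96.{i // 256}.{i % 256}", 80), f"backend-{i}")
            for i in range(num_services)]

def chain_lookup(chain, key):
    """Walk the chain top to bottom; return (backend, rules_examined)."""
    for examined, (rule_key, backend) in enumerate(chain, start=1):
        if rule_key == key:
            return backend, examined
    return None, len(chain)

def map_lookup(service_map, key):
    """One dictionary probe, regardless of how many Services exist."""
    return service_map.get(key)

chain = build_rule_chain(5000)
service_map = dict(chain)

# Worst case for the chain: the Service whose rule sits at the very end.
last_key = chain[-1][0]
backend, examined = chain_lookup(chain, last_key)
print(f"chain: {backend} after examining {examined} rules")
print(f"map:   {map_lookup(service_map, last_key)} after one probe")
```

The chain has to examine every rule before it reaches the last Service, while the map answers with a single probe no matter how large it gets.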

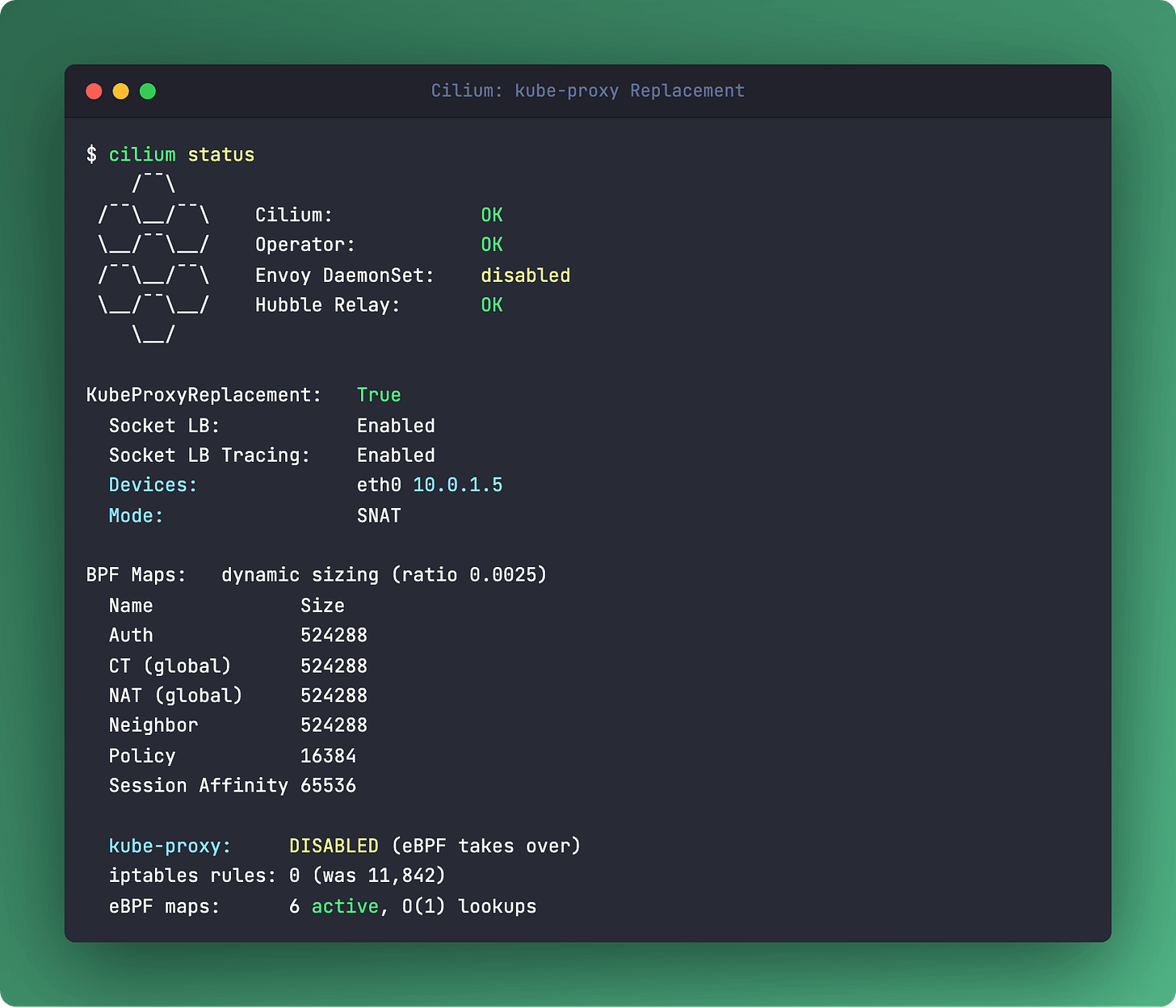

No chain traversal. No full-table rewrites. A Service update touches only that Service’s entries in the map. Install Cilium with kube-proxy replacement enabled:

    # Helm values
    kubeProxyReplacement: true
    k8sServiceHost: "api-server.example.com"
    k8sServicePort: "6443"

The moment Cilium starts, kube-proxy becomes dead weight. You can remove it entirely. Managed Kubernetes is moving the same way: GKE Dataplane V2 and Azure CNI Powered by Cilium on AKS ship a Cilium-based datapath that runs without kube-proxy, and on EKS you can install Cilium yourself and run it in kube-proxy replacement mode.

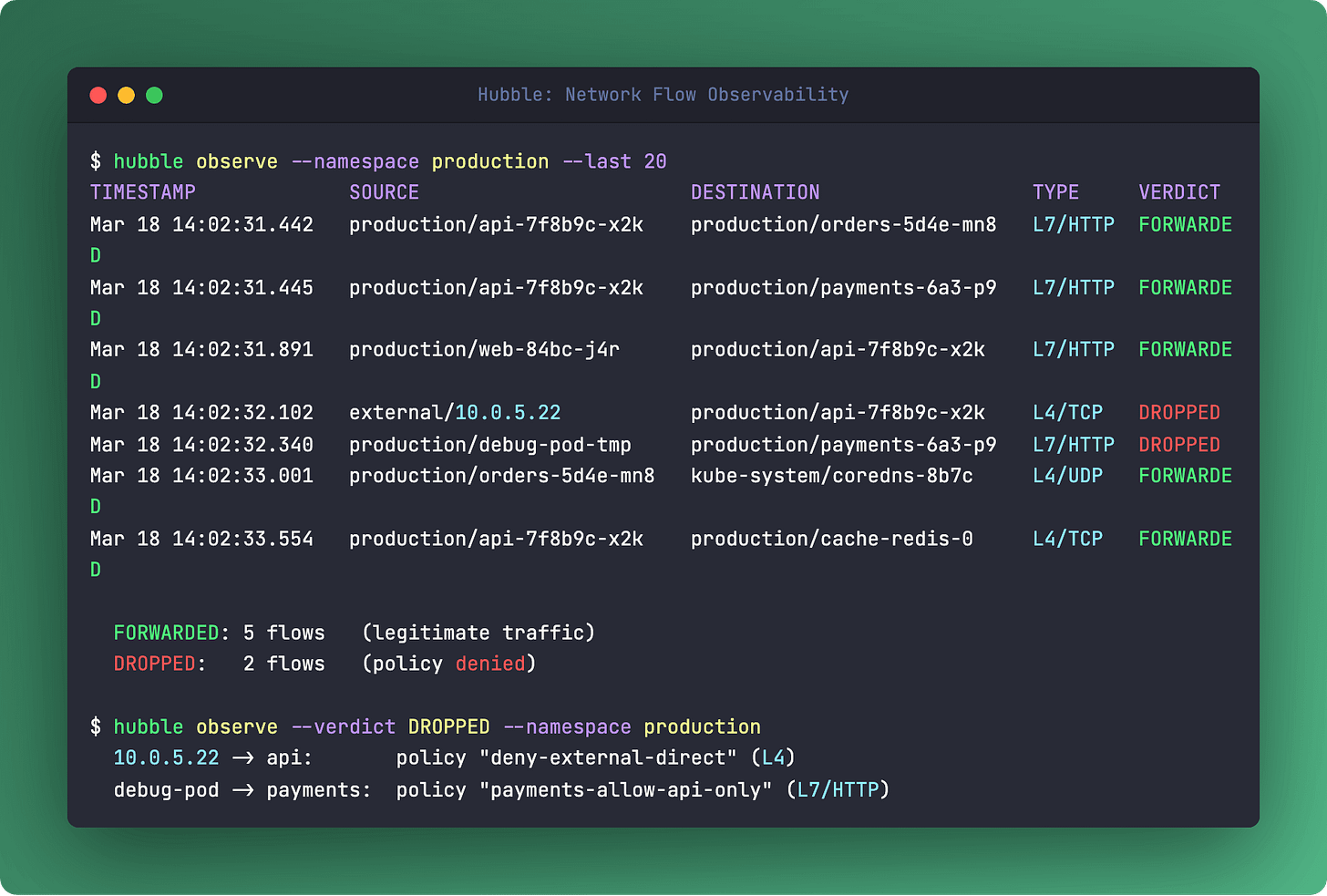

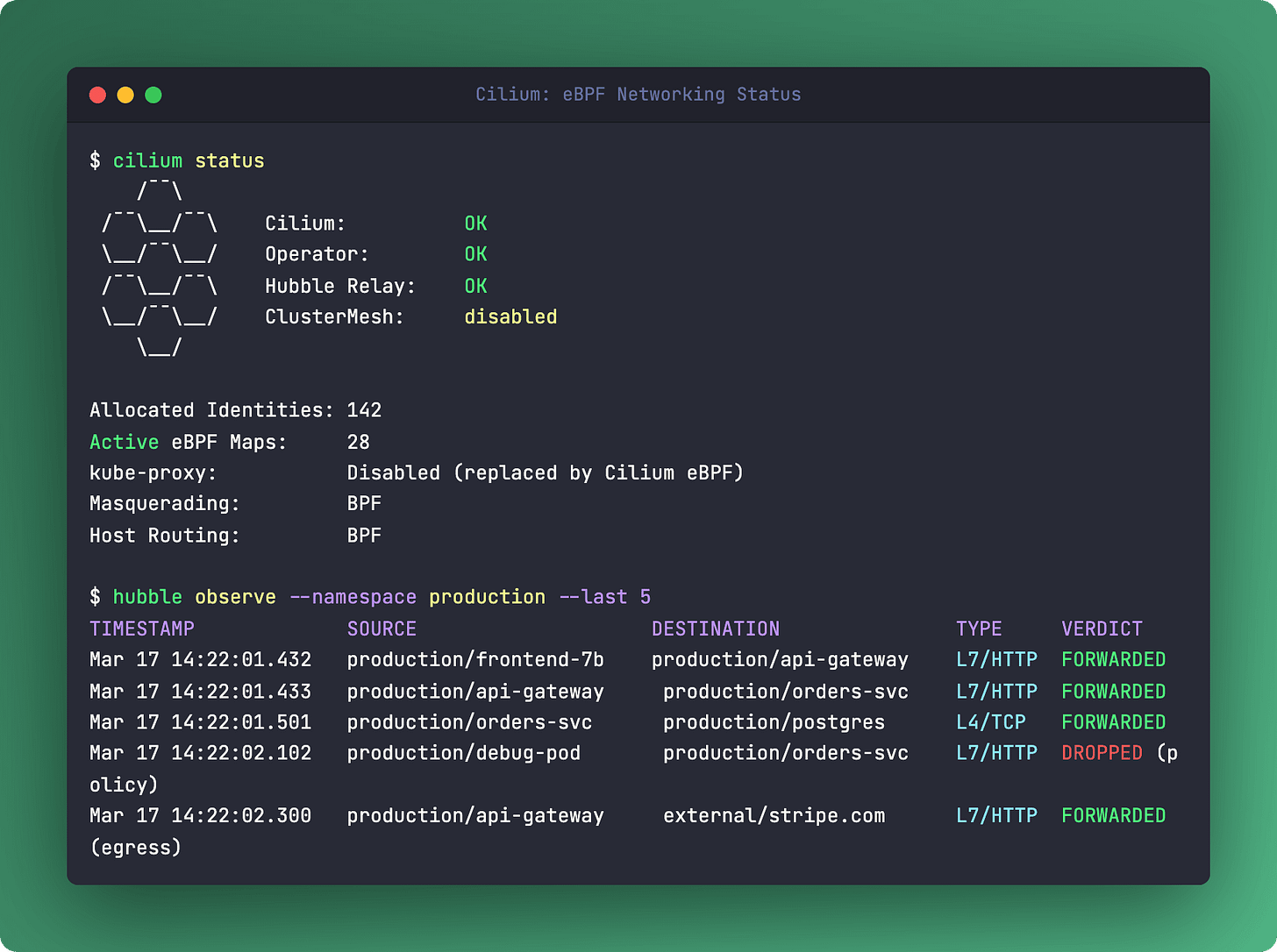

Hidden Gem: Hubble Observability

A security camera and a dispatcher for your cluster network.

Most CNIs are black boxes. Packets go in, packets come out. Something breaks, you stare at tcpdump output and hope for the best.

Hubble changes that completely. It’s Cilium’s built-in observability layer, and it sees everything - every L3/L4 connection and every policy verdict, plus HTTP requests and DNS lookups wherever L7 visibility is turned on. Not sampled. Every single flow.

The `hubble observe` command gives you a real-time stream of what’s happening. Filter by namespace, pod, verdict, protocol. See which policy blocked a request and why. The UI renders a live service map - no instrumentation, no sidecars, no extra agent beyond Cilium itself. It’s all built on the same eBPF data path that’s already handling your packets.
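If you want to post-process flows yourself, a small script is enough. The sketch below assumes the flows were exported as one JSON object per line; the sample records and their field names (`flow`, `verdict`, `source`, `destination`, `namespace`, `pod_name`) are assumptions for illustration, so check them against the output of your own Hubble version before relying on them.

```python
import json

# Hypothetical sample export: one JSON object per line.
# Field names are assumptions, not a guaranteed Hubble schema.
SAMPLE = """\
{"flow": {"verdict": "FORWARDED", "source": {"namespace": "shop", "pod_name": "frontend-1"}, "destination": {"namespace": "shop", "pod_name": "api-1"}}}
{"flow": {"verdict": "DROPPED", "source": {"namespace": "shop", "pod_name": "batch-7"}, "destination": {"namespace": "shop", "pod_name": "api-1"}}}
{"flow": {"verdict": "DROPPED", "source": {"namespace": "dev", "pod_name": "debug-0"}, "destination": {"namespace": "shop", "pod_name": "api-1"}}}
"""

def dropped_flows(lines, namespace):
    """Yield (source_pod, destination_pod) for dropped flows sent from one namespace."""
    for line in lines:
        line = line.strip()
        if not line:
            continue
        flow = json.loads(line).get("flow", {})
        source = flow.get("source", {})
        if flow.get("verdict") == "DROPPED" and source.get("namespace") == namespace:
            yield source.get("pod_name"), flow.get("destination", {}).get("pod_name")

result = list(dropped_flows(SAMPLE.splitlines(), "shop"))
for src, dst in result:
    print(f"dropped: {src} -> {dst}")
```

This is the same filter-by-namespace-and-verdict idea the CLI offers, just applied after the fact to saved flows.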

Hubble can also export Prometheus metrics once you enable them in the Helm values. HTTP request rates, DNS errors, policy drops - all without touching your application code. You get observability almost for free just by running Cilium.

The Showdown: iptables vs eBPF

iptables (kube-proxy): O(n) chain traversal for the first packet of each connection. Full ruleset rewrite on Service update. Degrades at 5K+ Services. No built-in observability. Source IP often lost to SNAT. No L7 awareness. Rules run in the kernel (netfilter), but a userspace daemon has to keep rewriting them.

eBPF (Cilium): O(1) hash map lookup. Per-Service map updates. Near-flat lookup cost as Services grow. Hubble sees L3-L7 flows. Source IP can be preserved (for example with DSR mode). L7 awareness - HTTP, gRPC, Kafka, DNS - through a node-local proxy. In-kernel programs for L3/L4.

The numbers tell the story. Benchmarks commonly cited for Cilium’s eBPF datapath report 2-3x lower latency and 40-50% less CPU usage than iptables at scale, though results vary with workload and kernel version. But the real win isn’t raw performance - it’s that you stop losing visibility at the network layer. iptables is a firewall tool pretending to be a load balancer. Cilium’s eBPF datapath is purpose-built for this job.

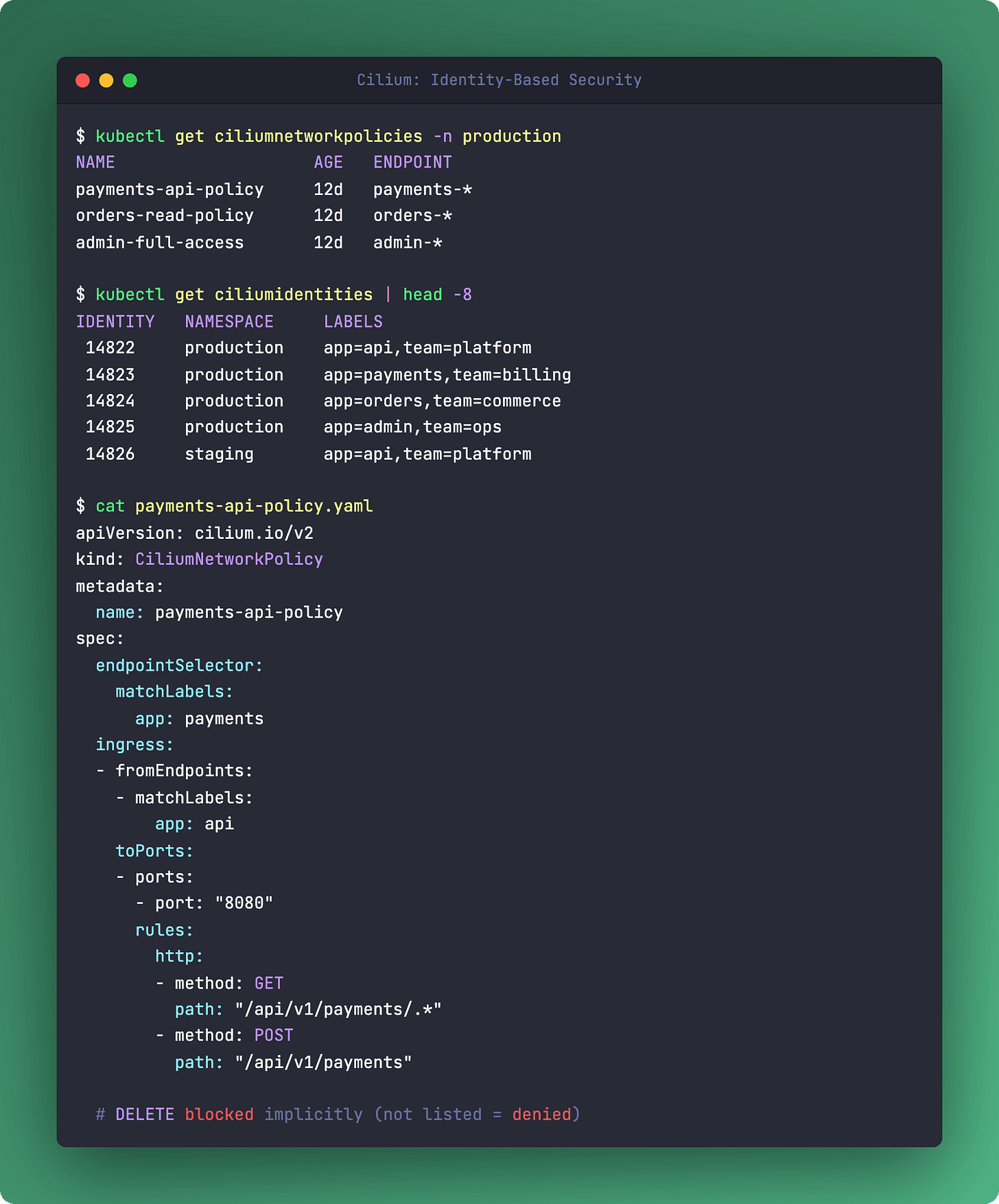

Deep Dive: Identity-Based Network Policies

Labels instead of IPs. Because pods don’t keep their addresses.

A Kubernetes NetworkPolicy is written with label selectors, but most traditional implementations enforce it with pod IP addresses under the hood. Pod restarts, gets a new IP, the enforcement rules need updating. Scale a deployment from 3 to 30 replicas - that’s 27 new IPs the policy engine needs to track. It works, but it’s fragile.

Cilium flips this. Every unique combination of labels gets a numeric identity. app=api, env=production becomes, say, identity 54321. That number lives in an eBPF map. When a packet arrives, Cilium checks the source identity against the destination’s allowed identities. Policy is keyed on identity, not on IP. Pods can restart, scale, migrate across nodes - the identity stays the same because the labels stay the same.
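A minimal sketch of the idea in Python. This is a toy allocator, not how Cilium actually stores or distributes identities; `IdentityAllocator`, `allowed_sources`, and the starting number 1000 are all hypothetical.

```python
# Toy identity model: one numeric identity per unique label set.
# Illustrative only; Cilium's real allocator and eBPF maps work differently.

class IdentityAllocator:
    def __init__(self, first_id=1000):
        self._next_id = first_id
        self._by_labels = {}

    def identity_for(self, labels):
        """Return the identity for a label set, allocating one on first sight."""
        key = frozenset(labels.items())  # order of labels does not matter
        if key not in self._by_labels:
            self._by_labels[key] = self._next_id
            self._next_id += 1
        return self._by_labels[key]

allocator = IdentityAllocator()
frontend = allocator.identity_for({"app": "frontend", "env": "production"})
api = allocator.identity_for({"app": "api", "env": "production"})

# Policy: destination identity -> set of source identities allowed to reach it.
allowed_sources = {api: {frontend}}

def is_allowed(src_identity, dst_identity):
    """Decide on identities alone; no pod IP appears anywhere in the policy."""
    return src_identity in allowed_sources.get(dst_identity, set())

# A restarted or rescheduled pod with the same labels gets the same identity,
# so the policy keeps working even though its IP has changed.
restarted_frontend = allocator.identity_for({"env": "production", "app": "frontend"})
print(restarted_frontend == frontend, is_allowed(restarted_frontend, api))
```

Scaling from 3 to 30 replicas adds 27 pods but zero new identities, which is why the policy side has nothing to update.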

CiliumNetworkPolicy takes this further with L7 rules:

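    apiVersion: cilium.io/v2
    kind: CiliumNetworkPolicy
    metadata:
      name: api-policy
    spec:
      endpointSelector:
        matchLabels:
          app: api-gateway
      ingress:
        - fromEndpoints:
            - matchLabels:
                app: frontend
          toPorts:
            - ports:
                - port: "8080"
                  protocol: TCP  # HTTP rules apply to TCP traffic
              rules:
                http:
                  - method: GET
                    path: "/api/v1/.*"  # path is a regular expression
                  - method: POST
                    path: "/api/v1/orders"

Allow GET on anything under /api/v1/, allow POST on /api/v1/orders, deny everything else. At L7. The eBPF datapath redirects matching traffic to a node-local Envoy proxy that Cilium manages, so the HTTP check itself runs in that proxy rather than in the kernel. No per-pod sidecar needed for basic HTTP filtering.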
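The allow-list logic of those two HTTP rules can be sketched in a few lines of Python. This is a simplified stand-in for what the proxy does, under two assumptions: the method is compared as a plain string, and the `path` regex must match the whole request path. The `RULES` list and `is_request_allowed` are illustrative names.

```python
import re

# The two HTTP rules from the policy above, as plain data.
# Assumption: `path` is a regex that has to match the entire request path.
RULES = [
    {"method": "GET", "path": r"/api/v1/.*"},
    {"method": "POST", "path": r"/api/v1/orders"},
]

def is_request_allowed(method, path, rules=RULES):
    """Allow a request only if some rule matches both its method and its path."""
    return any(
        rule["method"] == method and re.fullmatch(rule["path"], path) is not None
        for rule in rules
    )

for method, path in [
    ("GET", "/api/v1/users"),
    ("POST", "/api/v1/orders"),
    ("POST", "/api/v1/users"),
    ("DELETE", "/api/v1/orders"),
]:
    verdict = "allow" if is_request_allowed(method, path) else "deny"
    print(f"{method:6} {path:16} -> {verdict}")
```

Anything that matches no rule falls through to deny, which is the behavior you want from an allow-list.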

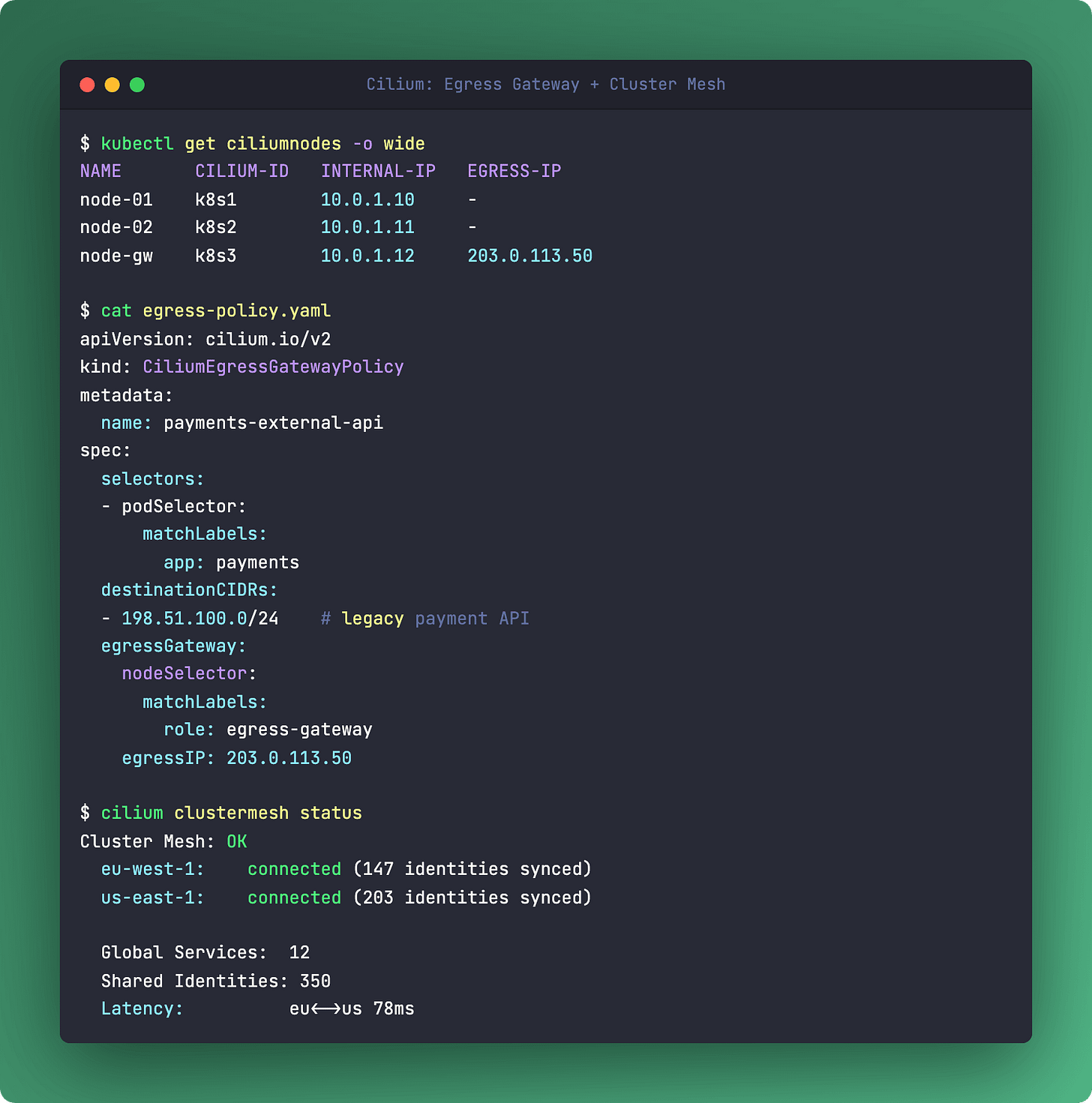

The One-Liner: Egress Gateway

    apiVersion: cilium.io/v2
    kind: CiliumEgressGatewayPolicy
    metadata:
      name: stable-egress
    spec:
      selectors:
        - podSelector:
            matchLabels:
              app: payment-service
      destinationCIDRs:
        - "0.0.0.0/0"
      egressGateway:
        nodeSelector:
          matchLabels:
            egress-gateway: "true"
        egressIP: "203.0.113.50"

Your payment service talks to Stripe. Stripe whitelists your IP. Pods move between nodes, IPs change. Classic problem.

CiliumEgressGatewayPolicy solves it at the eBPF level. Matching traffic gets intercepted, tunneled to a designated gateway node, and SNATed with a stable IP. No cloud NAT gateway service, no extra hops through a proxy pod. The eBPF program handles the interception and tunneling directly in the kernel. One policy, one stable IP, every external API that needs IP whitelisting is covered.
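The matching decision can be modeled in a few lines of Python. This is a toy version of the policy logic, not Cilium's datapath: `POLICY` and `source_ip_after_egress` are hypothetical names, and for simplicity non-matching traffic is shown leaving with the pod IP, whereas a real cluster would typically masquerade it to the node IP.

```python
import ipaddress

# The policy from above, reduced to the three things that matter for matching.
POLICY = {
    "pod_labels": {"app": "payment-service"},
    "destination_cidrs": [ipaddress.ip_network("0.0.0.0/0")],
    "egress_ip": "203.0.113.50",
}

def source_ip_after_egress(pod_labels, pod_ip, dest_ip, policy=POLICY):
    """Return the source IP the external service would see in this toy model."""
    labels_match = all(
        pod_labels.get(key) == value for key, value in policy["pod_labels"].items()
    )
    dest = ipaddress.ip_address(dest_ip)
    dest_match = any(dest in cidr for cidr in policy["destination_cidrs"])
    # Matching traffic is SNATed to the stable egress IP on the gateway node.
    return policy["egress_ip"] if labels_match and dest_match else pod_ip

print(source_ip_after_egress({"app": "payment-service"}, "10.0.1.15", "198.51.100.7"))
print(source_ip_after_egress({"app": "frontend"}, "10.0.2.9", "198.51.100.7"))
```

The payment pod can move to any node and pick up any pod IP; as long as its labels match, the outside world keeps seeing the same egress IP.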

Hot Take: Cluster Mesh

Cilium doesn’t stop at one cluster. Cluster Mesh connects multiple clusters with shared identities and global services. A pod in cluster-east can reach orders-svc in cluster-west using the same identity-based policies. No separate federation layer, no per-cluster policy rewrites. The clusters still need routable, non-overlapping pod networks between them, but on top of that the eBPF identity model extends across cluster boundaries. If you’re running multi-cluster, this is worth a serious look.

Questions? Feedback? Reply to this email. I read every one.

Podo Stack - Ripe for Prod.