Firecracker: the minimalism that runs your Lambda function

How a Rust VMM built on KVM, with 125 ms boot and under 5 MB of overhead per instance, became the boundary nobody talks about

Every AWS Lambda function you invoke today runs inside a microVM that can start in as little as 125 milliseconds, uses under 5 MB of memory for the VMM itself, and is torn down once Lambda retires that execution environment, which may have served many invocations. That microVM runs on a piece of software called Firecracker, written in Rust, open-sourced in 2018, and now quietly sitting under Lambda, Fargate, Fly.io, Kata Containers, and much of the serverless infrastructure that bills you for single-digit milliseconds at a time.

Most engineers have heard the name. Very few have looked at what Firecracker actually is, why it exists, and where it fits in the boundary-choice conversation that now dominates multi-tenant isolation, AI-agent sandbox platforms, and untrusted-code execution at scale.

Here's the full picture.

The dilemma that didn't have a clean answer

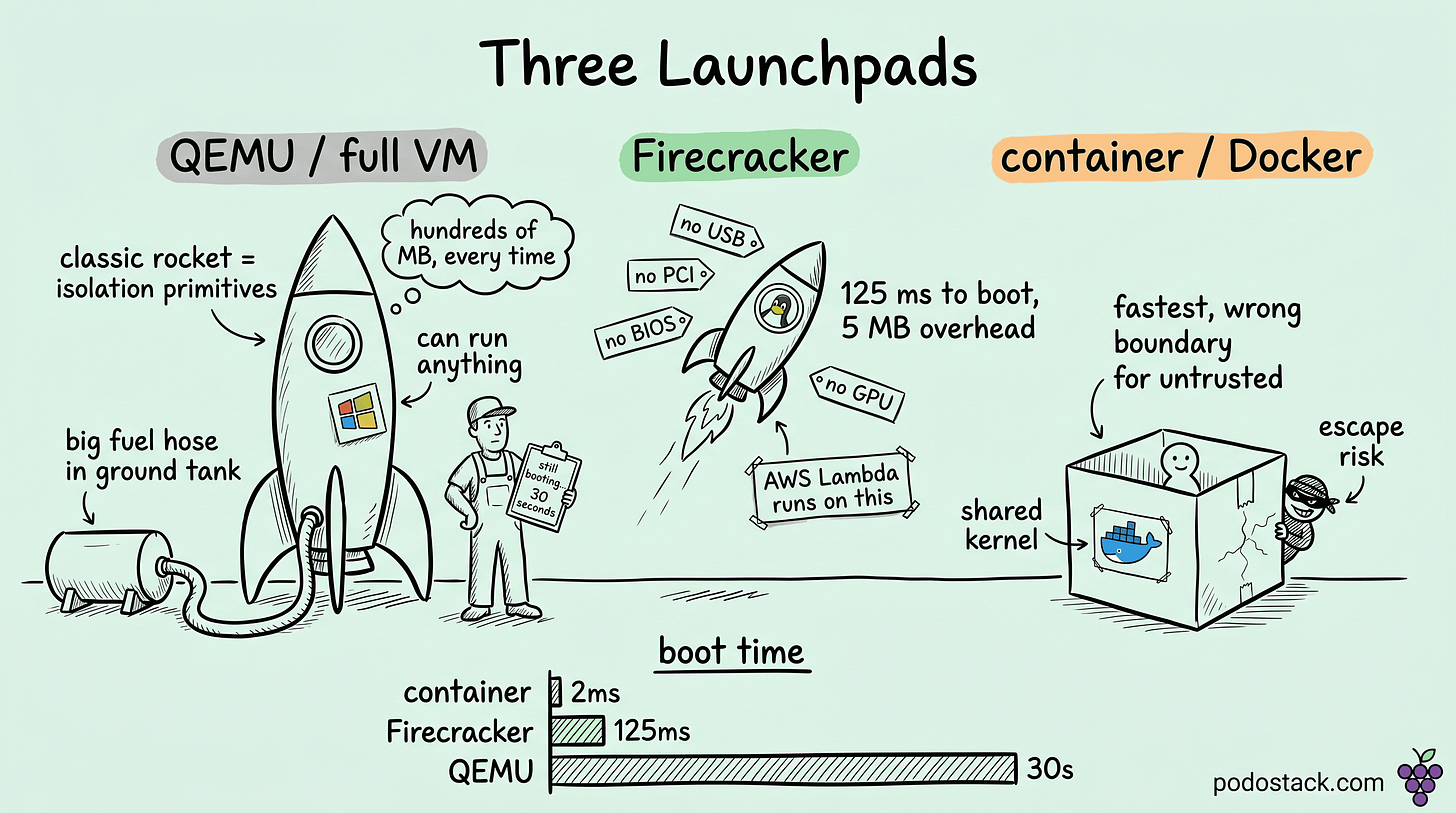

Before 2018, there were two main ways to isolate arbitrary user code:

Containers (Docker, LXC). Fast start, high density, shared kernel with the host. Process-level isolation through namespaces and cgroups. Strong enough for internal workloads, weak for genuinely untrusted code. "Container escape" is a legitimate attack category.

Traditional VMs (QEMU/KVM). Hardware isolation through a hypervisor. Strong security. Slow start, measured in seconds or minutes. Memory overhead in the hundreds of MB per instance.

AWS needed something for Lambda. Thousands of untrusted functions from different tenants per physical host. Millisecond start times. Strict isolation. Nothing on the shelf fit. QEMU was too heavy. Containers weren't strong enough.

Firecracker is the answer AWS built. A VMM that keeps the hardware isolation of a VM but strips out everything that isn't needed for ephemeral stateless workloads.

What got cut

Firecracker is minimalism as a security feature. Here is what QEMU emulates and Firecracker deliberately leaves out:

No USB, no PCI bus, no BIOS, no graphics. A running microVM sees a few virtio devices (network, block, vsock), a serial console, and a minimal keyboard controller with a single key, used to stop the microVM. That's essentially the full hardware surface.

No device passthrough. If you need a GPU, Firecracker is the wrong tool. Cloud Hypervisor or QEMU with VFIO handles that.

No live migration and no complex storage features. Snapshots were missing too for a long time, though snapshot/restore has since been added.

No Windows guest support. Linux and OSv only.

Everything cut is attack surface removed. A minimal device model is a minimal set of bugs.

The architecture that makes sub-second boot work

Three design choices explain the performance and the security posture:

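Rust at the foundation. Rust's memory-safety guarantees largely eliminate the buffer-overflow and use-after-free bugs that have plagued C-based hypervisors and device emulators. Firecracker's CVE list is noticeably shorter than QEMU's, a result of both the language and the far smaller codebase.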

API-driven, not CLI-driven. Firecracker exposes a REST API over a Unix socket. You PUT a machine configuration, a disk image path, and a kernel image path, then send an InstanceStart action. There's no sprawling command line and no shell scripting in the control path. Orchestrators build against the API directly.

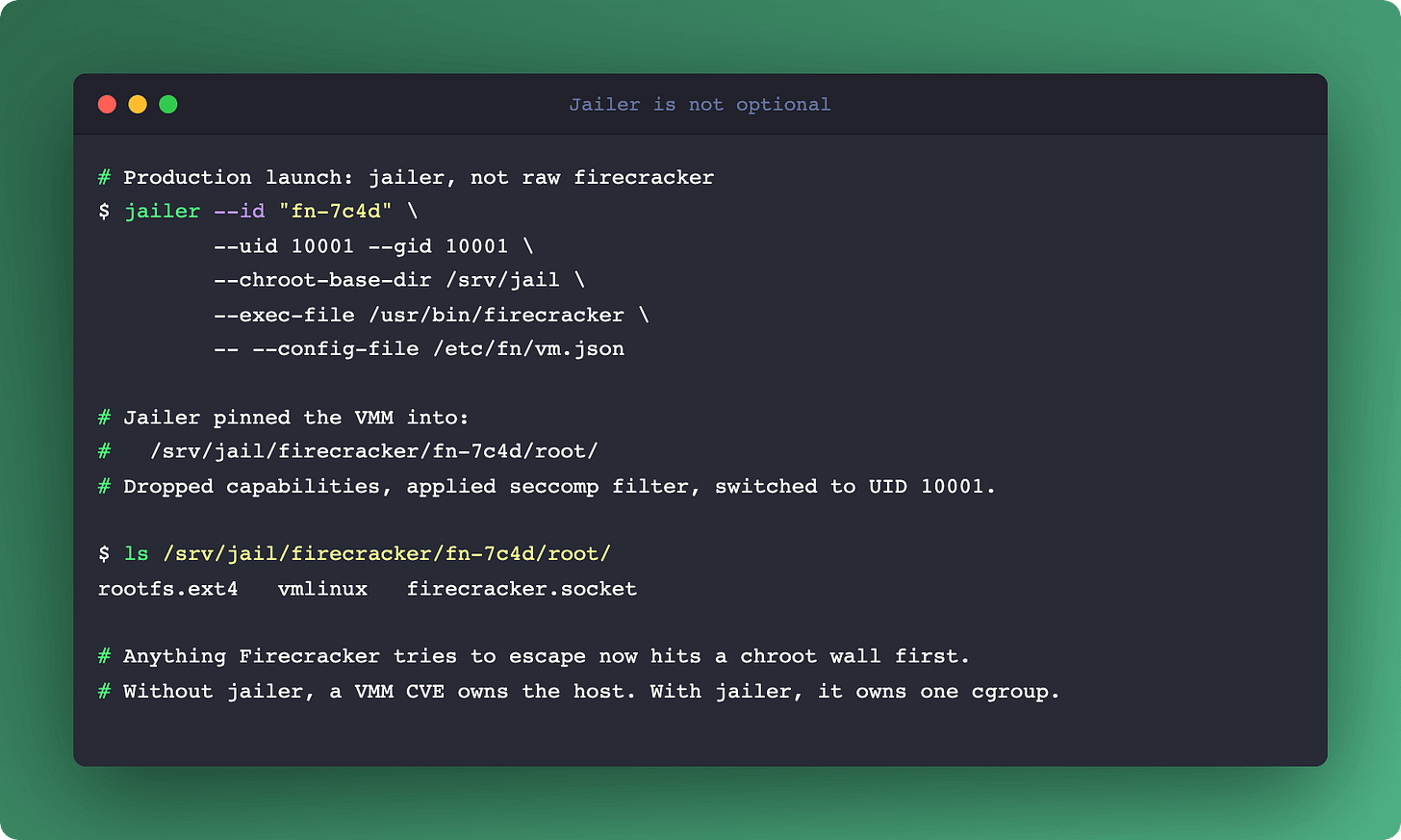
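Here is a minimal Python sketch of that call sequence using only the standard library. The endpoint paths and JSON fields follow the examples in Firecracker's getting-started guide (note that Firecracker uses PUT for these configuration calls); the helper names boot_requests and send_all, the file paths, and the default vCPU and memory sizes are hypothetical, so check the API spec shipped with your Firecracker version before relying on it.

```python
import http.client
import json
import socket


class UnixHTTPConnection(http.client.HTTPConnection):
    """HTTPConnection that talks to a Unix domain socket instead of TCP."""

    def __init__(self, socket_path: str, timeout: float = 5.0):
        # "localhost" only fills the Host header; the socket path is what matters.
        super().__init__("localhost", timeout=timeout)
        self.socket_path = socket_path

    def connect(self) -> None:
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.settimeout(self.timeout)
        sock.connect(self.socket_path)
        self.sock = sock


def boot_requests(kernel: str, rootfs: str, vcpus: int = 1, mem_mib: int = 128):
    """Hypothetical helper: ordered (method, path, body) calls that boot one microVM."""
    return [
        ("PUT", "/machine-config", {"vcpu_count": vcpus, "mem_size_mib": mem_mib}),
        ("PUT", "/boot-source", {
            "kernel_image_path": kernel,
            "boot_args": "console=ttyS0 reboot=k panic=1 pci=off",
        }),
        ("PUT", "/drives/rootfs", {
            "drive_id": "rootfs",
            "path_on_host": rootfs,
            "is_root_device": True,
            "is_read_only": False,
        }),
        ("PUT", "/actions", {"action_type": "InstanceStart"}),
    ]


def send_all(socket_path: str, requests) -> None:
    """Send each call in order; any status >= 300 is treated as an error here."""
    for method, path, body in requests:
        conn = UnixHTTPConnection(socket_path)
        try:
            conn.request(method, path, body=json.dumps(body),
                         headers={"Content-Type": "application/json"})
            resp = conn.getresponse()
            payload = resp.read()
            if resp.status >= 300:
                raise RuntimeError(f"{method} {path} -> {resp.status}: {payload!r}")
        finally:
            conn.close()
```

Usage, assuming Firecracker was started separately with firecracker --api-sock /tmp/firecracker.socket: send_all("/tmp/firecracker.socket", boot_requests("vmlinux", "rootfs.ext4")). Opening a short-lived connection per call keeps the sketch simple at the cost of a few extra socket setups.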

Jailer. A separate binary that sandboxes the Firecracker process itself using cgroups, namespaces, chroot, and seccomp-bpf syscall filters. If a guest escapes the microVM, it lands in a jailed process with minimal privileges. That makes two layers of isolation (the KVM boundary and the jailed host process), and both are required.

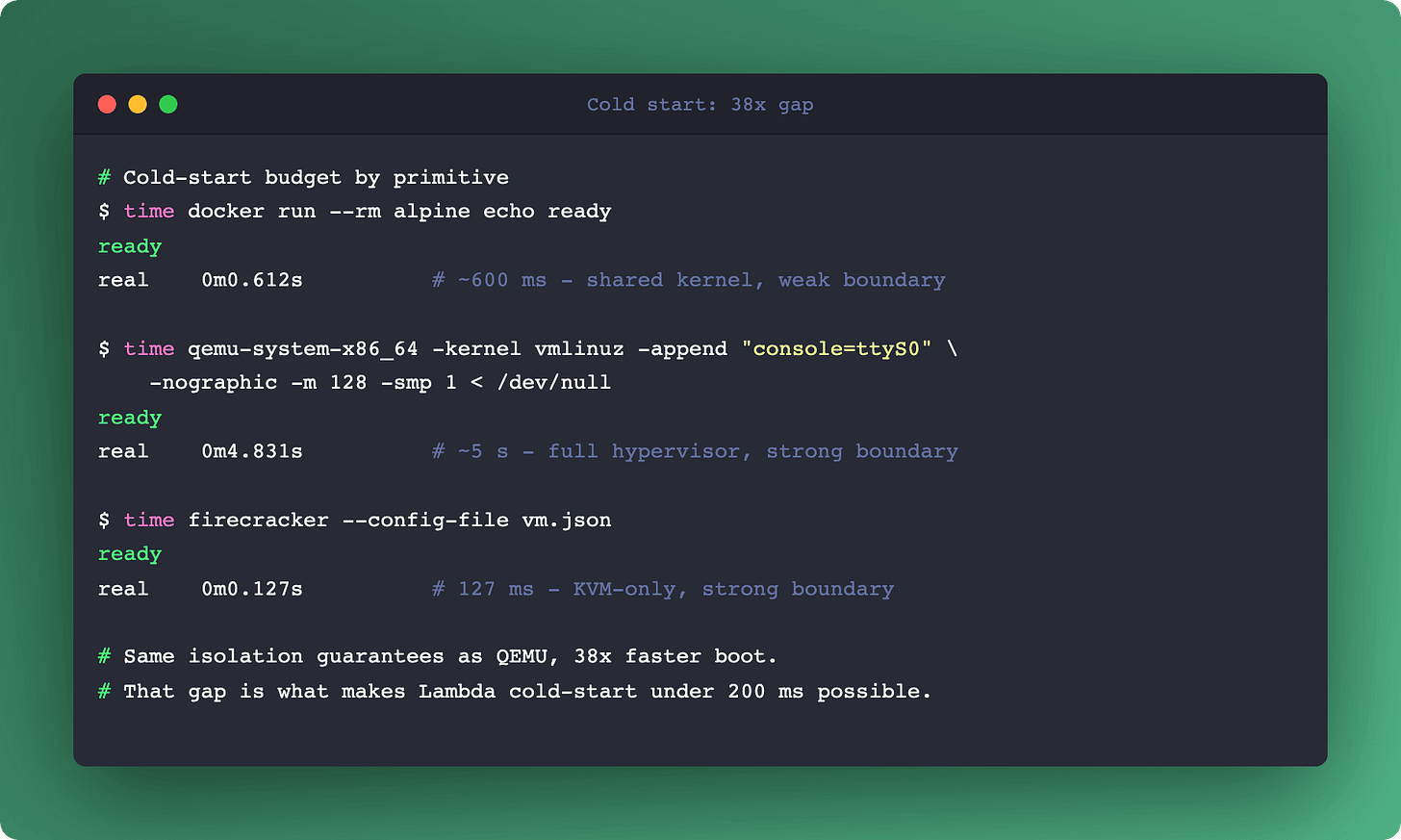
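A sketch of how an orchestrator might assemble a jailer invocation, in Python. The flags (--id, --exec-file, --uid, --gid, --chroot-base-dir, --netns, and a bare -- that passes the remaining arguments through to Firecracker) and the <base>/<exec name>/<id>/root chroot layout are taken from Firecracker's jailer documentation and should be verified against your version. The VM id, uid/gid, binary path, and helper names are hypothetical.

```python
import os
from typing import Optional


def jailer_argv(vm_id: str, uid: int, gid: int,
                exec_file: str = "/usr/bin/firecracker",
                chroot_base: str = "/srv/jailer",
                netns: Optional[str] = None) -> list[str]:
    """Hypothetical helper: jailer command line that confines one Firecracker process."""
    argv = [
        "jailer",
        "--id", vm_id,                 # unique per microVM; becomes part of the chroot path
        "--exec-file", exec_file,      # the Firecracker binary to jail
        "--uid", str(uid),             # unprivileged user the VMM runs as
        "--gid", str(gid),
        "--chroot-base-dir", chroot_base,
    ]
    if netns:
        argv += ["--netns", netns]     # join a pre-created network namespace
    # Everything after "--" is handed to Firecracker itself.
    argv += ["--", "--api-sock", "/run/firecracker.socket"]
    return argv


def jail_root(vm_id: str, exec_file: str = "/usr/bin/firecracker",
              chroot_base: str = "/srv/jailer") -> str:
    """Chroot directory the jailer builds: <chroot_base>/<exec file name>/<id>/root."""
    return os.path.join(chroot_base, os.path.basename(exec_file), vm_id, "root")
```

Because the API socket path is resolved inside the chroot, the host-side socket for a jailed VM lives under jail_root(vm_id), not at /run/firecracker.socket on the host.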

The boot numbers are the headline:

Startup to running guest code: as little as 125 ms.

VMM memory overhead: under 5 MB per microVM.

Density on i3.metal: thousands of microVMs per physical host.

Built-in rate limiting for network and block I/O at the VMM layer lets operators cap each microVM's bandwidth and operations per second, which keeps noisy-neighbor problems from spreading across microVMs sharing a host.
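To make the mechanism concrete: Firecracker's limiters are token buckets, configured per device with a bucket size, a refill time in milliseconds, and an optional one-time burst. Below is a simplified Python model of that idea (not Firecracker's Rust implementation), plus the rough JSON shape of an rx_rate_limiter for a network interface. The class name and the numeric values are illustrative assumptions.

```python
class TokenBucket:
    """Simplified token bucket: `size` tokens refill linearly over `refill_time_ms`."""

    def __init__(self, size: int, refill_time_ms: int, one_time_burst: int = 0):
        if refill_time_ms <= 0:
            raise ValueError("refill_time_ms must be positive")
        self.size = size
        self.refill_time_ms = refill_time_ms
        self.tokens = float(size)          # start with a full bucket
        self.burst = one_time_burst        # extra budget, spent once and never refilled
        self.last_ms = 0.0

    def _refill(self, now_ms: float) -> None:
        elapsed = max(0.0, now_ms - self.last_ms)
        self.last_ms = now_ms
        self.tokens = min(float(self.size),
                          self.tokens + elapsed * self.size / self.refill_time_ms)

    def consume(self, amount: int, now_ms: float) -> bool:
        """Spend `amount` tokens (bytes or ops) if available; otherwise spend nothing."""
        self._refill(now_ms)
        from_burst = min(self.burst, amount)
        remaining = amount - from_burst
        if remaining > self.tokens:
            return False                   # caller must defer this I/O until tokens refill
        self.burst -= from_burst
        self.tokens -= remaining
        return True


# Rough shape of a Firecracker rx_rate_limiter on a network interface
# (illustrative values: ~10 MB and 1000 ops per 1000 ms).
RX_RATE_LIMITER = {
    "bandwidth": {"size": 10_000_000, "refill_time": 1000},
    "ops": {"size": 1000, "refill_time": 1000},
}
```

Keeping separate bandwidth and ops buckets matters: a guest issuing many tiny requests can starve a shared disk even while staying under a byte budget.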

Where Firecracker actually runs in production

Four categories, plus the one people forget:

AWS Lambda. The default execution environment. Your function runs inside a Firecracker microVM, either one kept warm from an earlier invocation or one started on demand for a cold start.

AWS Fargate. The task runtime for ECS and EKS. Firecracker under the hood, presenting a container API.

Fly.io. Entire platform built on Firecracker as the primitive.

Kata Containers. An OpenInfra Foundation project that runs standard OCI containers inside lightweight VMs for stronger isolation. Kata supports multiple VMMs; Firecracker is one of the popular backends. If your pods set runtimeClassName: kata-fc, Firecracker is the boundary.

AI-agent sandboxes and sandbox-as-a-service platforms. E2B, Daytona, Modal, and the emerging category of remote code-execution platforms need strong sandboxes for isolating tool calls from agents or untrusted snippets from customer tenants. E2B is built directly on Firecracker; other players use Firecracker-derived microVMs or comparable boundaries such as gVisor. Strong boundary plus millisecond start is the combination that makes the category work.
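For the Kata route, opting a workload into Firecracker is a one-field change on the pod spec. A hedged Python sketch that emits the manifests as JSON (which kubectl accepts): the RuntimeClass handler name kata-fc is an assumption that matches what Kata's kata-deploy installer typically registers, and the helper name, pod name, and image are hypothetical.

```python
import json


def kata_fc_manifests(pod_name: str, image: str) -> list[dict]:
    """Hypothetical helper: a RuntimeClass plus a Pod that opts into it."""
    runtime_class = {
        "apiVersion": "node.k8s.io/v1",
        "kind": "RuntimeClass",
        "metadata": {"name": "kata-fc"},
        # `handler` must match a runtime configured in containerd/CRI-O on the node;
        # "kata-fc" is an assumed name, so verify it on your cluster.
        "handler": "kata-fc",
    }
    pod = {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": pod_name},
        "spec": {
            "runtimeClassName": "kata-fc",   # routes this pod into a Firecracker microVM
            "containers": [{"name": "app", "image": image}],
        },
    }
    return [runtime_class, pod]


if __name__ == "__main__":
    # A v1 List can be piped to `kubectl apply -f -`.
    items = kata_fc_manifests("untrusted-job", "python:3.12-slim")
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": items}, indent=2))
```

If kata-deploy already installed the RuntimeClass on your cluster, only the pod half is needed.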

Where Firecracker is the wrong tool

Three categories of workload where Firecracker is a poor fit:

GPU-dependent workloads. No PCI passthrough, no direct device access. ML inference and training stay on QEMU or bare metal.

Stateful databases. Disks are ephemeral by design. You can configure persistent storage, but you're working against the grain.

Windows or macOS guests. Not supported. Linux guest kernel only, with OSv as the other option for unikernel use cases.

If your use case needs any of these, look at Cloud Hypervisor (also Rust, newer, different design priorities), Kata with QEMU backend, or just QEMU directly.

The comparison that matters for platform decisions

A clean mental model for boundary strength:

| Runtime | Isolation boundary | Start time | Overhead | Safe for untrusted code? |
|---|---|---|---|---|
| Docker container | Shared kernel + namespaces | ms | MB | No |
| gVisor | Userspace kernel | 100s of ms | Tens of MB | Yes (weak) |
| Firecracker microVM | KVM hypervisor | ~125 ms | ~5 MB (VMM) | Yes (strong) |
| QEMU VM | Full hypervisor | Seconds | 100s of MB | Yes (strong) |

The rows aren't interchangeable. Picking Firecracker over Docker is a conscious trade: stronger isolation for the overhead of a VMM. Picking Firecracker over QEMU is a different trade: less feature surface for faster boot and lower overhead.

For your own platform, the decision usually comes down to three questions:

Is the workload trusted or untrusted? (Trusted → Docker. Untrusted → microVM or VM.)

Does it need hardware passthrough or Windows? (Yes → QEMU. No → Firecracker.)

Does it need to start in under a second at scale? (Yes → Firecracker. No → QEMU is fine.)

Those three cover most real decisions.
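The three questions read naturally as an ordered decision function. A minimal Python sketch that mirrors them exactly; the function name and boolean parameters are hypothetical, and it deliberately ignores alternatives such as Cloud Hypervisor or gVisor.

```python
def choose_boundary(untrusted: bool,
                    needs_passthrough_or_windows: bool,
                    needs_subsecond_start_at_scale: bool) -> str:
    """Hypothetical helper that walks the three questions in order."""
    if not untrusted:
        return "Docker"               # Q1: trusted code, a container boundary is enough
    if needs_passthrough_or_windows:
        return "QEMU"                 # Q2: GPUs/VFIO or non-Linux guests rule out Firecracker
    if needs_subsecond_start_at_scale:
        return "Firecracker"          # Q3: strong boundary plus fast boot
    return "Firecracker or QEMU"      # neither constraint applies; both fit the questions
```

For example, an AI-agent code runner executing customer snippets maps to choose_boundary(True, False, True), which returns "Firecracker". The order matters: the trust question comes first because it decides whether a hypervisor boundary is needed at all.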

The operational pieces that aren't in the Getting Started docs

If you stand up Firecracker yourself (outside a managed platform), a few things matter:

Kernel choice for the guest. Firecracker documents a minimal Linux kernel config. Running a distro kernel inside works but wastes boot time. The "alpine-microvm" or custom-built minimal kernel is the right choice.

Jailer is not optional. Running Firecracker without the jailer in production means a guest escape lands in an unconfined host process, which is why every serious deployment runs it.

Snapshot/restore (added in later versions). Lets you boot and initialize a microVM once, save its memory and device state, and restore fresh copies from that snapshot in milliseconds. A key primitive for warm-pool patterns in FaaS, AI-agent sandbox platforms, and any sandbox-as-a-service that needs sub-second cold start.
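A sketch of the snapshot-then-clone flow and a warm pool built on it, in Python. The endpoints (PATCH /vm to pause, PUT /snapshot/create, and PUT /snapshot/load sent to a fresh Firecracker process) follow Firecracker's snapshot documentation, but field names have changed across releases (older versions took mem_file_path on load instead of mem_backend), so treat them as assumptions to check. The WarmPool class and its restore callback are hypothetical.

```python
from collections import deque
from typing import Callable, Deque


def snapshot_create_requests(snap_path: str, mem_path: str):
    """Pause a booted, initialized microVM and write a full snapshot to disk."""
    return [
        ("PATCH", "/vm", {"state": "Paused"}),
        ("PUT", "/snapshot/create", {
            "snapshot_type": "Full",
            "snapshot_path": snap_path,   # VM and device state
            "mem_file_path": mem_path,    # guest memory
        }),
    ]


def snapshot_load_requests(snap_path: str, mem_path: str):
    """Sent to a fresh Firecracker process, before any other configuration."""
    return [
        ("PUT", "/snapshot/load", {
            "snapshot_path": snap_path,
            "mem_backend": {"backend_type": "File", "backend_path": mem_path},
            "resume_vm": True,
        }),
    ]


class WarmPool:
    """Hypothetical pool that keeps `target` restored microVMs ready to hand out."""

    def __init__(self, restore: Callable[[], str], target: int):
        self.restore = restore            # assumed: spawns Firecracker, loads snapshot, returns a VM id
        self.target = target
        self.ready: Deque[str] = deque()
        self.refill()

    def refill(self) -> None:
        # In practice this would run in the background after each acquire().
        while len(self.ready) < self.target:
            self.ready.append(self.restore())

    def acquire(self) -> str:
        # Hand out a pre-restored VM; fall back to restoring inline if the pool is empty.
        return self.ready.popleft() if self.ready else self.restore()
```

Firecracker's snapshot documentation also warns that restoring one snapshot into many microVMs duplicates guest state such as random-number seeds and identifiers, so a production warm pool has to account for that.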

Networking model. Firecracker expects a tap device per microVM. At density, this becomes a host-networking concern, not a VMM concern. Common pattern: one physical host with thousands of tap devices bridged through a fast virtual switch.
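A small Python sketch of the per-microVM host plumbing: it generates iproute2 commands (as argv lists, not executed) that create one tap device and attach it to a bridge, plus the matching body for Firecracker's PUT /network-interfaces/{iface_id} call. The fc-tap naming scheme, the br0 bridge, and the 06:00 MAC prefix are hypothetical choices; the 15-character limit on Linux interface names is real and is easy to hit once names carry per-VM indexes.

```python
IFNAMSIZ = 16  # Linux interface names: at most 15 bytes plus a NUL terminator


def tap_name(vm_index: int, prefix: str = "fc-tap") -> str:
    """Hypothetical naming scheme: one tap per microVM, keyed by index."""
    name = f"{prefix}{vm_index}"
    if len(name) >= IFNAMSIZ:
        raise ValueError(f"interface name too long: {name!r}")
    return name


def tap_setup_commands(vm_index: int, bridge: str = "br0") -> list[list[str]]:
    """iproute2 commands that create one tap device and attach it to a bridge."""
    tap = tap_name(vm_index)
    return [
        ["ip", "tuntap", "add", "dev", tap, "mode", "tap"],
        ["ip", "link", "set", "dev", tap, "master", bridge],
        ["ip", "link", "set", "dev", tap, "up"],
    ]


def guest_mac(vm_index: int) -> str:
    """Locally administered unicast MAC derived from the index (0x06 first octet)."""
    return "06:00:" + ":".join(f"{b:02x}" for b in vm_index.to_bytes(4, "big"))


def network_interface_body(vm_index: int) -> dict:
    """Body for Firecracker's PUT /network-interfaces/eth0."""
    return {"iface_id": "eth0",
            "guest_mac": guest_mac(vm_index),
            "host_dev_name": tap_name(vm_index)}
```

Deriving both the tap name and the MAC from one index keeps host and guest addressing in lockstep, which matters once a host carries thousands of devices.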

Summary

Firecracker is what happens when a single use case (secure multi-tenant serverless) forces a complete rewrite of the VMM concept. The result is narrower than QEMU, stronger than a container, and fast enough that boot latency stops dominating your worst-case tail.

If you're building a platform that runs untrusted code at scale, Firecracker belongs in your stack. If you're running standard workloads on standard clusters, Kata-with-Firecracker is an option for tenants you don't trust. And if you're watching the AI-agent sandbox and sandbox-as-a-service category, Firecracker-derived microVMs are the primitive that makes the rest of the security model work.

For the container-runtime context including the Wasm alternative at the other extreme of the boundary axis, see Tools From the Future. For K8s-native runtime options, eBPF Beyond Networking covers Tetragon as a complementary runtime-security primitive.